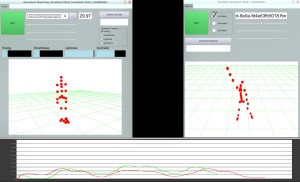

Similarity engine

The similarity engine measures the similarity between dance performances modelled using movement features, i.e., numerical descriptors of how movement properties and qualities evolve over time. Once a recording of interest (the query) has been identified in the library, the engine lets the user search for similar motion sequences according to a range of user-defined criteria. In the derived web-based similarity search application, the user first (1) selects a 5 s excerpt from the query segment and then (2) specifies (a) the features to take into consideration and (b) for each feature, a value from 0 to 1 representing its relevance in the estimation of global similarity. The algorithm then assigns each recording in the database a value indicating its similarity to the query and returns a list of recordings ranked by that value. The user can watch the query and the output segments simultaneously to compare them visually.
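The weighted ranking described above can be sketched as follows. This is only an illustration under stated assumptions, not the engine's actual algorithm: the feature names (`energy`, `fluidity`), the Euclidean distance between feature time series, and the mapping from distance to a similarity score are all hypothetical choices made for the example.

```python
from math import sqrt


def feature_distance(a, b):
    """Euclidean distance between two equal-length feature time series."""
    return sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def similarity(query, candidate, weights):
    """Weighted similarity in (0, 1]; 1.0 means identical on all weighted features.

    `weights` maps a feature name to its user-chosen relevance in [0, 1].
    The distance-to-similarity mapping 1 / (1 + d) is an assumption.
    """
    total = sum(weights.values())
    if total == 0:
        raise ValueError("at least one feature needs a non-zero weight")
    dist = sum(
        w * feature_distance(query[name], candidate[name])
        for name, w in weights.items()
    ) / total
    return 1.0 / (1.0 + dist)


def rank(query, library, weights):
    """Return (recording_id, similarity) pairs, most similar first."""
    scored = [(rec_id, similarity(query, feats, weights))
              for rec_id, feats in library.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical data: each recording is a dict of feature name -> time series.
query = {"energy": [0.1, 0.5, 0.9], "fluidity": [0.8, 0.7, 0.6]}
library = {
    "rec_a": {"energy": [0.1, 0.5, 0.9], "fluidity": [0.8, 0.7, 0.6]},
    "rec_b": {"energy": [0.9, 0.5, 0.1], "fluidity": [0.8, 0.7, 0.6]},
    "rec_c": {"energy": [0.1, 0.5, 0.9], "fluidity": [0.1, 0.2, 0.3]},
}
# The user rates "energy" as highly relevant and "fluidity" as marginal.
weights = {"energy": 1.0, "fluidity": 0.2}

ranking = rank(query, library, weights)
for rec_id, score in ranking:
    print(f"{rec_id}: {score:.3f}")
```

With these weights, a recording that differs from the query only in the low-relevance feature (`rec_c`) ranks above one that differs in the high-relevance feature (`rec_b`), which is the effect the per-feature relevance values are meant to have.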

Movement sketching tool

The tool allows users to record their own movement with low-cost sensors (e.g., xOSC, Notch), analyse it in terms of a chosen selection of expressive movement qualities, and search for similar movements through the similarity engine. In this way, the tool compares the user’s movements with those of professional dancers stored in the library and presents the query results in standard or VR-based visualisations, or makes them audible through sonification.

Real-time mobile movement search application

The application allows the user to record movements and perform similarity search within the movement library from any mobile device, such as a smartphone or tablet. In the minimal configuration, the user points the device camera at a moving person, and the captured movements are used as the query for similarity search, which returns a stream of images corresponding to similar movements in the repository. In a more extensive setup, the search results can be projected onto a wall with a projector so that they can be viewed in real time.

Blending engine

The blending engine is an interactive tool for composing and blending MoCap data from the movement library to create new motion sequences. Sequences can be assembled linearly, i.e., by blending movements consecutively in time to form a longer, seamless sequence, or in parallel, i.e., by superposing parts of movements to form new movements, e.g., combining the leg movement of one sequence with the upper-body movement of another. Users can assemble choreographies or blend sequences and save them as new FBX files. These files can be read and displayed inside the Unity engine using any of the created avatars (Choreomorphy).
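The two blending modes can be illustrated with a minimal sketch. It assumes a hypothetical, simplified motion representation (a list of frames, each mapping a joint name to a tuple of values) and uses plain linear interpolation for the crossfade; a production system would more likely interpolate joint rotations as quaternions. Reading MoCap data and writing FBX files is not shown.

```python
def lerp_frame(f1, f2, t):
    """Linearly interpolate every joint value between two frames (t in [0, 1])."""
    return {
        joint: tuple((1 - t) * a + t * b for a, b in zip(f1[joint], f2[joint]))
        for joint in f1
    }


def blend_sequential(first, second, overlap):
    """Linear setup: crossfade the last `overlap` frames of `first`
    into the first `overlap` frames of `second`."""
    if overlap < 1 or overlap > min(len(first), len(second)):
        raise ValueError("overlap must fit inside both sequences")
    start = len(first) - overlap
    cross = [
        lerp_frame(first[start + i], second[i], (i + 1) / (overlap + 1))
        for i in range(overlap)
    ]
    return first[:start] + cross + second[overlap:]


def blend_parallel(base, other, joints_from_other):
    """Parallel setup: take the listed joints from `other`, the rest from `base`."""
    length = min(len(base), len(other))
    return [
        {
            joint: (other[i][joint] if joint in joints_from_other else base[i][joint])
            for joint in base[i]
        }
        for i in range(length)
    ]


# Hypothetical one-value-per-joint sequences, four frames each.
walk = [{"leg": (0.0,), "arm": (0.0,)} for _ in range(4)]
wave = [{"leg": (1.0,), "arm": (1.0,)} for _ in range(4)]

seq = blend_sequential(walk, wave, overlap=2)        # walk, then wave
mixed = blend_parallel(walk, wave, joints_from_other={"arm"})  # walking legs, waving arms
print(len(seq), mixed[0])
```

The sequential blend shortens the result by the overlap length because the overlapping frames of both inputs are merged into a single transition; the parallel blend truncates to the shorter input so that every output frame has data for all joints.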