WhoLoDancE movement library and annotator

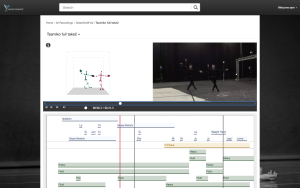

The WhoLoDancE movement library (WML) is a web-based system for accessing and navigating the dance motion repository: synchronized multimodal recordings (MoCap and video) created during motion capture sessions and then segmented and annotated by Consortium dance experts. It consists of a web-based interface, the data, the metadata (including title, genre, annotations, performer, dance company and date of recording), an annotation management back-end and a user-management system.

The system allows the user to browse recordings or search for specific ones by metadata- and annotation-related keywords, as well as to create personal playlists. For each recording, the user can visualise the video of the performance beside the corresponding MoCap-derived 3D avatar and interact with it, e.g., by rotating the avatar to observe the movement from different perspectives. A timeline shows the progress of the sequence and the evolution of the associated actions (e.g., step, arm/leg gesture, change of support), movement principle states (e.g., in focus, symmetrical/asymmetrical, in balance) and movement qualities (e.g., heavy, fluid, sudden).
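To make the keyword search concrete, the following is a minimal sketch of a case-insensitive match over metadata and annotation text. It is not the WML implementation: the `Recording` class, its field names, the `search` function and the sample entries are all hypothetical, chosen only to mirror the metadata listed above.

```python
from dataclasses import dataclass, field


@dataclass
class Recording:
    # Hypothetical record; the fields mirror some of the metadata named in the text.
    title: str
    genre: str
    performer: str
    annotations: list[str] = field(default_factory=list)


def search(recordings, keyword):
    """Return the recordings whose metadata or annotations contain the keyword."""
    needle = keyword.casefold()
    hits = []
    for rec in recordings:
        haystack = [rec.title, rec.genre, rec.performer, *rec.annotations]
        if any(needle in text.casefold() for text in haystack):
            hits.append(rec)
    return hits


# Illustrative data only; these are not entries from the actual repository.
library = [
    Recording("Sequence A", "flamenco", "Performer 1", ["step", "sudden"]),
    Recording("Sequence B", "ballet", "Performer 2", ["arm gesture", "fluid"]),
    Recording("Sequence C", "contemporary", "Performer 3", ["change of support", "fluid"]),
]

fluid_titles = [rec.title for rec in search(library, "fluid")]
print(fluid_titles)
```

A real system would more likely delegate this to a database or search index; the linear scan is used here only to keep the sketch self-contained.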

The annotation tool, embedded into the WML, enables manual annotation of performances with free text and controlled vocabularies based on the conceptual framework of WhoLoDancE, through a tabular and a timeline view.

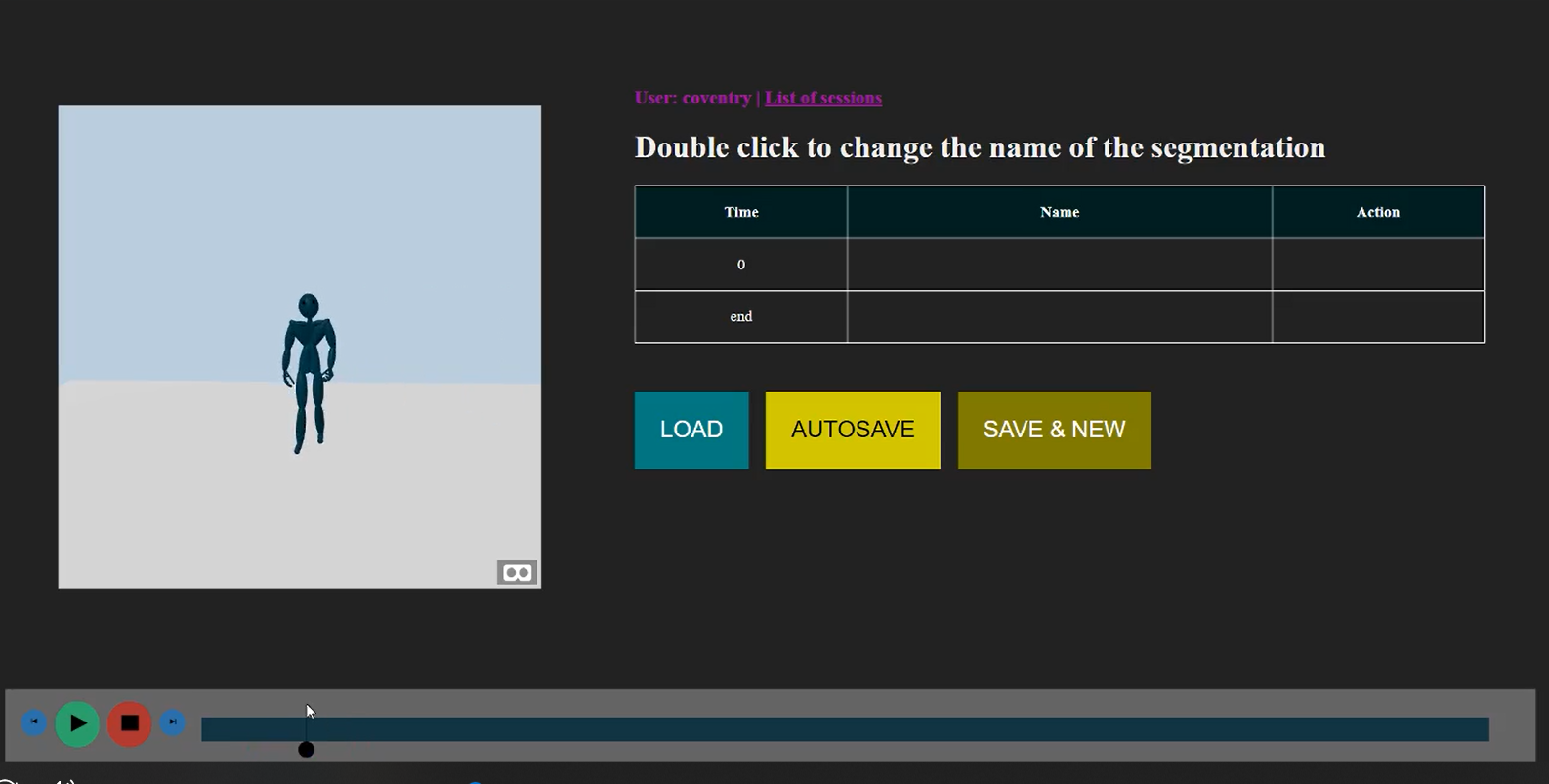

Segmentation tool

The segmentation tool is a web-based application for manually segmenting MoCap sequences into simpler movement segments. The tool includes three interconnected modules: a 3D scene that the user can rotate, zoom in and out of, and switch to or from full-screen view, in which the avatar changes colour at the beginning of each new segment; a player for following the execution or jumping to specific frames of interest, which shows segments as coloured progress bars; and a table listing the annotated segments, with labels and commands to add or modify annotations.
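One way to picture how the three modules can stay in sync is to treat a segmentation as a sorted list of start frames and to look up the active segment for the frame the player is currently showing. The sketch below assumes exactly that; the `Segment` class, the function names, the colour palette and the sample labels are hypothetical and are not taken from the tool itself.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass
class Segment:
    start_frame: int  # first frame of the segment (inclusive)
    label: str        # annotation text, as it might appear in the table


def segment_at(segments, frame):
    """Return the index of the segment containing `frame`, or None.

    Assumes `segments` is sorted by start_frame and that each segment
    runs until the next one begins.
    """
    starts = [seg.start_frame for seg in segments]
    index = bisect_right(starts, frame) - 1
    return index if index >= 0 else None


PALETTE = ["red", "green", "blue"]  # hypothetical avatar colours


def avatar_colour(segments, frame):
    """Pick a colour that changes whenever a new segment begins."""
    index = segment_at(segments, frame)
    if index is None:
        return "grey"  # frame lies before the first segment
    return PALETTE[index % len(PALETTE)]


segments = [Segment(0, "preparation"), Segment(120, "turn"), Segment(300, "landing")]
print(avatar_colour(segments, 150))
```

Storing only start frames keeps the segments contiguous by construction, so adding a boundary in the table never leaves a gap or an overlap on the progress bar.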

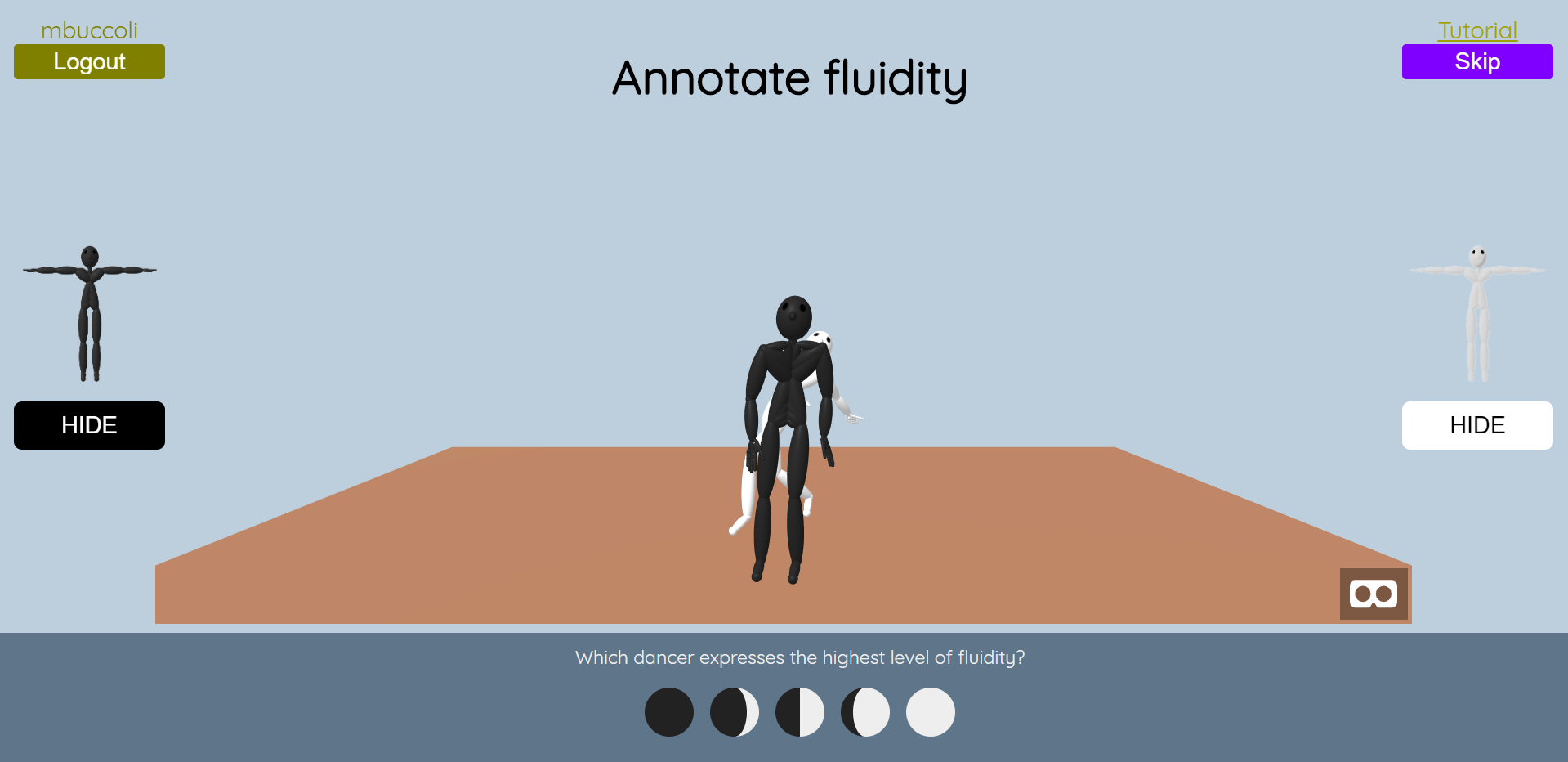

Movement quality annotation by comparison tool

The movement quality annotation by comparison (MQA) tool is meant to make the annotation procedure considerably lighter and easier, allowing experts from the dance community to contribute with little or no training. With this tool, we aim to collect a large number of annotations from a large community, to be used for training algorithms that can automatically describe dance performances. Given a movement quality, say fluidity, the tool displays two short dance movements in a loop, rendered in 3D by a black and a white avatar, and asks the user to compare them and select the one that expresses a higher level of fluidity. Users can choose between five levels, or skip the comparison if they consider it not meaningful or do not feel confident enough to decide.
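As an illustration of how such pairwise judgements could be turned into per-clip scores, the sketch below averages signed preferences and ignores skipped pairs. The encoding is an assumption: the text does not specify the five levels, so they are modelled here as integers from -2 (the first clip shows much more of the quality) to +2 (the second clip shows much more), with `None` for a skip. Plain averaging is a stand-in and is not presented as the method used in the project.

```python
from collections import defaultdict


def mean_preference(comparisons):
    """Average signed preference per clip, ignoring skipped comparisons."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for clip_a, clip_b, level in comparisons:
        if level is None:  # the annotator skipped this pair
            continue
        if level not in (-2, -1, 0, 1, 2):  # assumed five-level scale
            raise ValueError(f"unexpected level: {level}")
        totals[clip_a] -= level  # a positive level favours clip_b
        totals[clip_b] += level
        counts[clip_a] += 1
        counts[clip_b] += 1
    return {clip: totals[clip] / counts[clip] for clip in counts}


# Hypothetical judgements for one quality, e.g. fluidity.
comparisons = [
    ("clip_1", "clip_2", 2),
    ("clip_2", "clip_3", -1),
    ("clip_1", "clip_3", None),  # skipped
    ("clip_1", "clip_3", 1),
]
scores = mean_preference(comparisons)
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking)
```

With many annotators and sparse pairs, a dedicated paired-comparison model would usually be preferable to a raw average; the sketch only shows the shape of the data.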

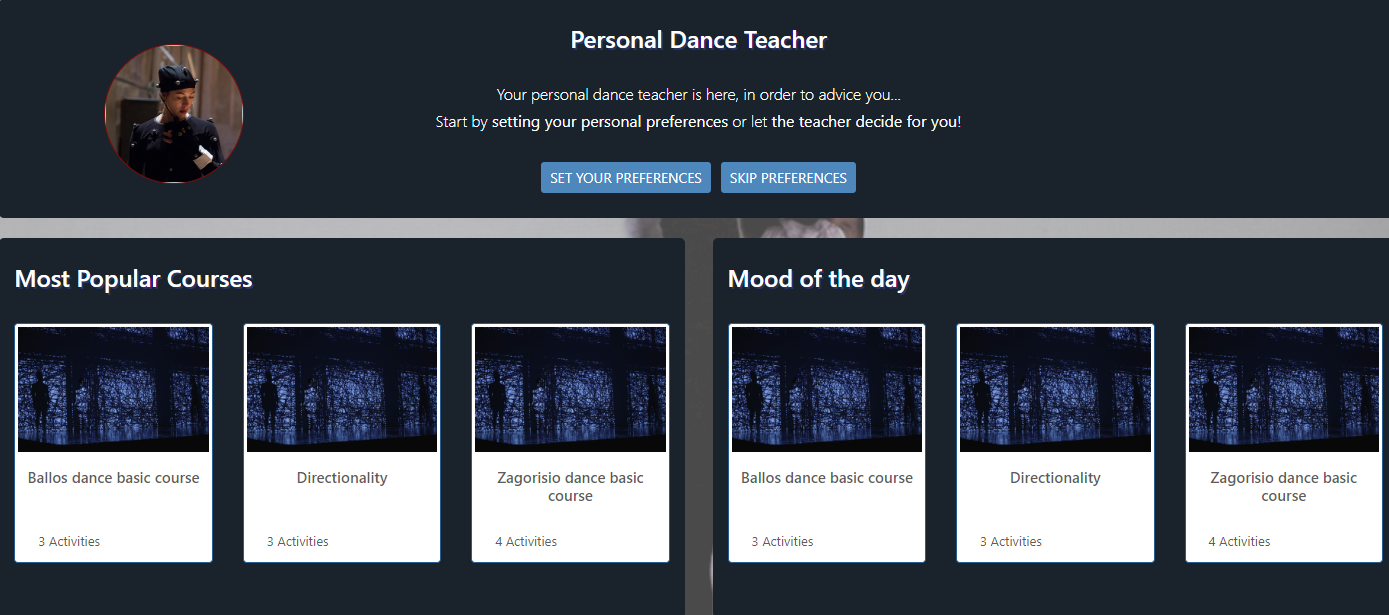

WhoLoDancE educational platform

The educational platform is a web-based application which showcases the various WhoLoDancE tools and scenarios from a pedagogic perspective. It complements the Movement Library by combining specific scenarios to design learning courses and activities for a particular dance genre (contemporary, ballet, flamenco, Greek folk), objective (movement principles, qualities, actions), context (creativity, learning steps and forms, improvisation, etc.), and tool (Sonification, Choreomorphy, Annotator, Movement Sketching, Kinect-based activities, etc.). The learning activities described in the platform require specific hardware depending on the tool. As a proof of concept, specific examples of pedagogic scenarios were built for low-end devices, e.g., Kinect, for interactive exercises relating to alignment and directionality.